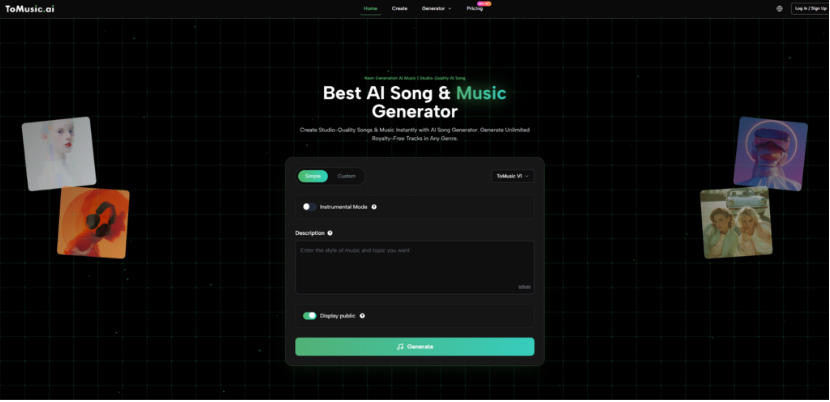

Creative work has always involved a tension between ideas and execution. Musicians often know what they want to express long before they know how to produce it. What’s changing now is that this gap is shrinking. With tools like AI Music Generator, the process is no longer about translating ideas into technical steps—it is about refining intent until the output aligns.

This shift introduces a different kind of challenge. Instead of asking “how do I make this sound,” users are now asking “how do I describe what I want clearly enough.” And that subtle change reshapes the entire creative process.

Why Describing Music Is Harder Than It Seems

At first glance, describing music feels simple. But in practice, language is ambiguous.

A phrase like:

- “warm acoustic track”

can be interpreted in multiple ways:

- soft guitar tones

- slow tempo

- minimal percussion

Ambiguity As Both Strength And Weakness

The system thrives on flexibility, but that flexibility also introduces variability.

Positive Side

- allows creative exploration

- produces unexpected results

Negative Side

- reduces predictability

- requires multiple iterations

In my testing, the most effective prompts tend to combine:

- emotion + context + structure

rather than relying on a single descriptor.
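One way to make that combination concrete is to treat the three ingredients as separate fields and join them into a single description. The sketch below is only an illustration: the `PromptSpec` class, its field names, and the example wording are assumptions made here, not part of any tool's interface.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Three prompt ingredients; the field names are illustrative only."""
    emotion: str    # how the track should feel
    context: str    # what the track is for, or the scene it accompanies
    structure: str  # how the track should unfold over time

    def to_prompt(self) -> str:
        # Join the non-empty parts into one comma-separated description.
        parts = [self.emotion, self.context, self.structure]
        return ", ".join(p.strip() for p in parts if p.strip())


# A single descriptor versus a combined description (both made up for this example).
vague = "warm acoustic track"
specific = PromptSpec(
    emotion="warm and nostalgic",
    context="acoustic track for a quiet morning scene",
    structure="soft guitar intro building to a fuller chorus",
).to_prompt()
print(specific)
```

Keeping the parts separate also makes later refinement easier, since one ingredient can be reworded while the other two stay fixed.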

How The System Builds Musical Structure Internally

Even though the interface is simple, the internal process is layered.

Multi-Layer Generation Pipeline

The system constructs music in stages:

Stage 1: Semantic Parsing

Understanding:

- mood

- genre

- intent

Stage 2: Structural Planning

Determining:

- song length

- section layout

- dynamic flow

Stage 3: Audio Synthesis

Generating:

- melody

- harmony

- instrumentation

- vocals (if applicable)

This layered approach explains why outputs often feel cohesive rather than random.
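The staged flow above can be pictured as three functions, each feeding the next. This is a toy model of the data flow only, not a description of how any real generator is implemented: the keyword tables, the `Intent` type, the fixed section layout, and the text labels standing in for audio are all assumptions made for the sketch.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical keyword tables, used only to make the sketch runnable.
MOODS = {"warm", "dark", "upbeat", "calm"}
GENRES = {"acoustic", "rock", "jazz", "electronic"}


@dataclass
class Intent:
    mood: str
    genre: str


def parse_prompt(prompt: str) -> Intent:
    """Stage 1: pull a mood and a genre out of the text (toy keyword match)."""
    words = prompt.lower().split()
    mood = next((w for w in words if w in MOODS), "neutral")
    genre = next((w for w in words if w in GENRES), "unspecified")
    return Intent(mood, genre)


def plan_structure(length_s: int = 90) -> list[tuple[str, int]]:
    """Stage 2: split the target length evenly across a fixed section layout."""
    layout = ["intro", "verse", "chorus", "outro"]
    per_section = length_s // len(layout)
    return [(name, per_section) for name in layout]


def synthesize(intent: Intent, plan: list[tuple[str, int]]) -> list[str]:
    """Stage 3: stand-in for audio synthesis; returns text labels, not audio."""
    return [f"{name}: {secs}s of {intent.mood} {intent.genre}" for name, secs in plan]


intent = parse_prompt("warm acoustic track")
plan = plan_structure()
track = synthesize(intent, plan)
print(track)
```

The point of the sketch is the ordering: every section is rendered from the same parsed intent and the same plan, which is one plausible reason staged output hangs together.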

Why Lyrics Introduce Constraints And Clarity

Adding lyrics changes the system’s behavior significantly.

With Lyrics to Music AI, the model must align:

- syllables with rhythm

- phrases with melody

- narrative with structure

Constraints That Improve Output

Interestingly, more constraints often lead to better results.

Rhythmic Anchoring

Lyrics define:

- timing

- pacing

Narrative Direction

The story guides:

- emotional progression

- intensity changes

Repetition Patterns

Repeated lines naturally form:

- choruses

- hooks

This makes lyric-based generation feel more structured than prompt-only generation.
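The repetition point is easy to check on your own lyrics before generating anything. The sketch below counts exact repeated lines as a rough stand-in for chorus or hook candidates. The function name and the sample lyrics are invented for this example, and exact line matching is a simplifying assumption; it says nothing about how a generator actually detects repetition.

```python
from collections import Counter


def find_repeated_lines(lyrics: str, min_count: int = 2) -> list[str]:
    """Return lines that occur at least `min_count` times, in first-seen order."""
    # Normalize whitespace and case so trivially different copies still match.
    lines = [ln.strip().lower() for ln in lyrics.splitlines() if ln.strip()]
    counts = Counter(lines)
    seen = set()
    repeated = []
    for ln in lines:
        if counts[ln] >= min_count and ln not in seen:
            seen.add(ln)
            repeated.append(ln)
    return repeated


# Made-up lyrics, used only to exercise the function.
lyrics = """\
Walking down the empty road
Hold on to the light
City sleeping, wind gone cold
Hold on to the light
"""
hooks = find_repeated_lines(lyrics)
print(hooks)
```

If nothing comes back, the lyrics have no repeated line to anchor a chorus, and adding one may be worth trying.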

The Real Workflow In Practice

While the interface appears simple, effective use follows a pattern.

Step 1: Start With A Clear Intent

Users either:

- describe a scene

- provide lyrics

- define a mood

Clarity here reduces randomness later.

Step 2: Apply Basic Constraints

Selecting:

- genre

- tempo

- vocal type

These settings narrow the solution space.

Step 3: Iterate Through Variations

Instead of editing:

- generate multiple outputs

- compare results

- refine descriptions

Iteration becomes the main creative tool.
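The generate, compare, refine loop can be sketched in a few lines. Everything here is a placeholder: `generate_track` stands in for whatever generation call a tool exposes (it returns a text label, not audio), and the `score` callback stands in for the listener's own judgment, which in practice is a person listening rather than a function.

```python
from typing import Callable


def generate_track(prompt: str, seed: int) -> str:
    """Hypothetical stand-in for a generator call; returns a label, not audio."""
    return f"{prompt} [variation {seed}]"


def pick_best(prompt: str, score: Callable[[str], float], n_variations: int = 4) -> str:
    """Generate several variations and return the one the scorer rates highest."""
    candidates = [generate_track(prompt, seed) for seed in range(n_variations)]
    return max(candidates, key=score)


def toy_score(track: str) -> float:
    # Placeholder preference: favor the highest variation number.
    return float(track.split()[-1].rstrip("]"))


best = pick_best("warm acoustic track", toy_score)
print(best)
```

Refinement is then a matter of rewording the prompt based on what the chosen version got right or wrong, and running the loop again.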

Comparing Creative Control Across Methods

| Dimension | Manual Production | AI-Assisted Generation |
| --- | --- | --- |
| Control Precision | Very high | Moderate |
| Speed | Slow | Fast |
| Learning Curve | Steep | Shallow |
| Creativity Style | Technical | Descriptive |
| Output Consistency | High | Variable |

This highlights an important idea: control is traded for speed and accessibility.

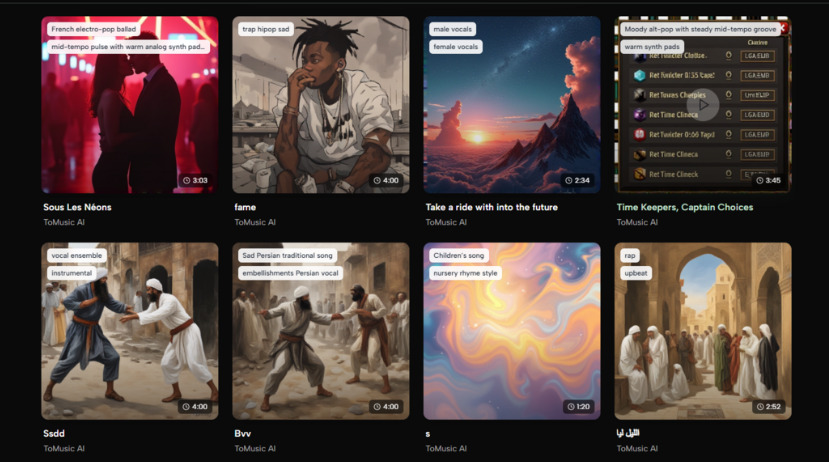

Where This Workflow Is Most Effective

Not all creative tasks benefit equally from this approach.

Fast Content Production

Ideal for:

- social media videos

- background tracks

- short-form content

Concept Development

Useful for:

- testing musical ideas

- exploring styles

- building rough drafts

Non-Musicians Entering Audio Creation

People without training can:

- produce usable tracks

- experiment freely

- learn through iteration

What Still Requires Human Judgment

Despite automation, several aspects remain human-driven.

Evaluating Emotional Accuracy

The system may approximate a mood but miss subtle nuance.

Selecting The Best Version

Multiple outputs require:

- comparison

- subjective judgment

Refining Creative Direction

Users must still decide:

- what “feels right”

- what aligns with their vision

Limitations That Shape Real Usage

The system is powerful, but not complete.

Prompt Sensitivity

Small wording changes can:

- significantly alter results

- produce inconsistent outputs

Lack Of Detailed Editing

Users cannot:

- isolate individual instruments

- fine-tune mix levels

Dependence On Iteration

High-quality results often require:

- multiple generations

- gradual refinement

These constraints suggest that the tool is best used as an exploratory system.

Why This Changes Creative Thinking

The biggest shift is not technical—it is cognitive.

From Execution To Direction

Users move from:

- “how do I build this”

to:

- “what do I want this to feel like”

From Skill To Expression

The emphasis shifts toward:

- clarity of intent

- descriptive ability

From Linear Process To Feedback Loop

Creation becomes:

- input → output → adjustment

rather than:

- plan → execute → finalize

A Different Kind Of Creative Skill

Using systems like this effectively requires:

- precise language

- iterative thinking

- openness to variation

It is less about mastery of tools and more about guiding outcomes.

Closing Perspective On This Shift

The emergence of AI-driven music tools does not remove complexity—it redistributes it.

Instead of being hidden in software interfaces, complexity now exists in:

- how we describe ideas

- how we evaluate results

- how we refine intent

And that might be the most important change of all.

Because it suggests that creativity is no longer limited by technical skill—but shaped by how clearly we can express what we imagine.